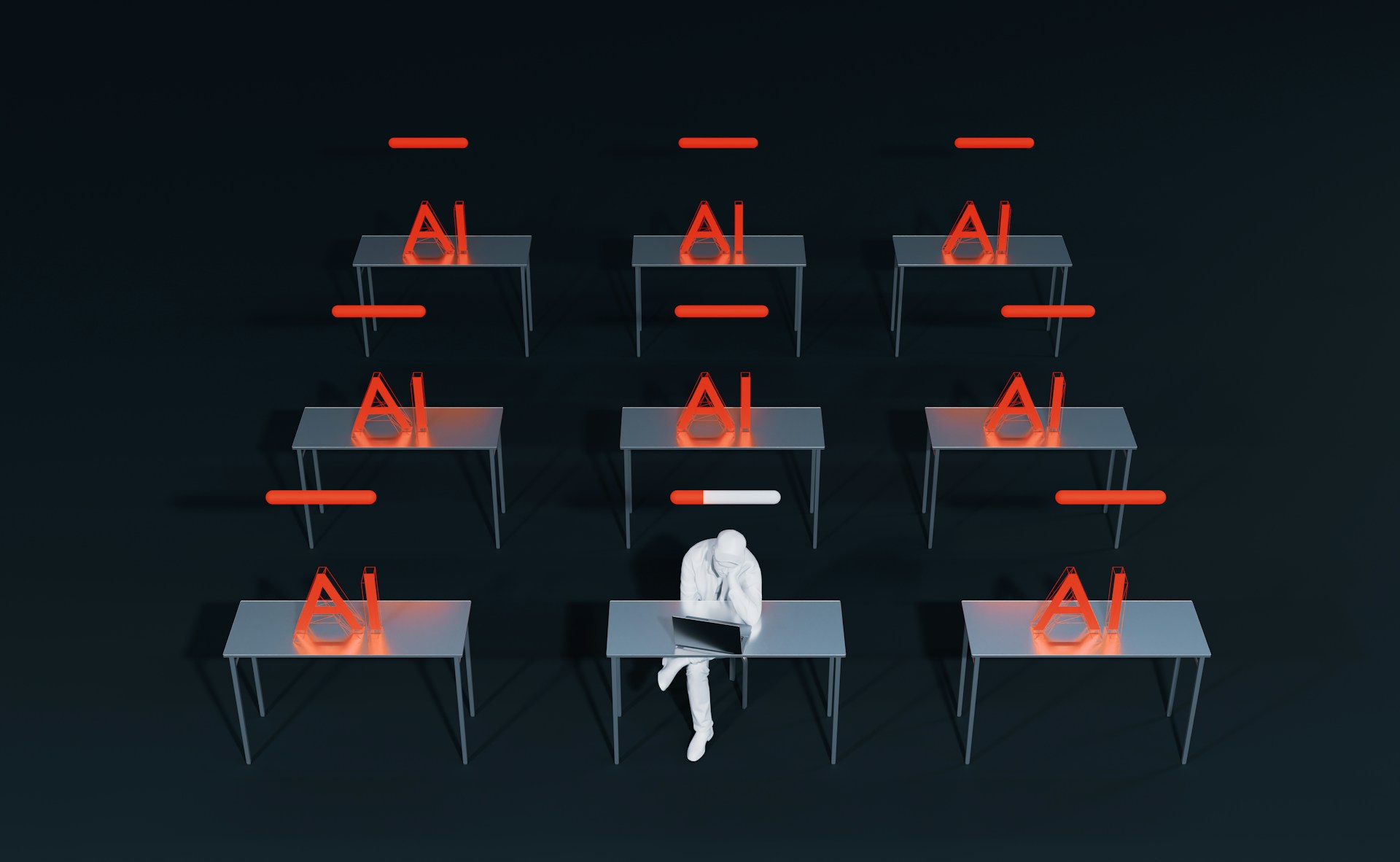

Singapore organisations are accelerating AI adoption despite significant security concerns, resulting in a gap between goals and oversight, according to a study released today by AI security firm TrendAI.

Sixty-seven per cent of Singapore respondents felt pressured to approve AI deployments despite security concerns, with one in nine describing those concerns as “extreme” but saying they were overridden to maintain competitiveness and meet internal demand.

Just 29 per cent felt prepared for the speed of AI adoption, and 59 per cent said they were moderately confident in their understanding of AI legal frameworks.

The study by TrendAI, part of cybersecurity firm Trend Micro, surveyed 3,700 business and IT decision makers, including 200 from Singapore.

It also revealed that governance maturity remains limited. Just 39 per cent of organisations have comprehensive AI policies in place, with many still drafting them.

Forty-eight per cent cite unclear regulations and compliance standards as key barriers, showing how AI is often being deployed before the rules governing its use are fully established.

“Organisations are not lacking awareness of risk, they’re lacking the conditions to manage it,” said David Ng, TrendAI’s managing director for Singapore, Philippines and Indonesia.

“When deployment is driven by competitive pressure rather than governance maturity, you create a situation where AI is embedded into critical systems without the controls needed to manage it safely,” he added.

The TrendAI report highlights how governance inconsistencies and unclear responsibility for AI risk are compounding the issue. Security teams are left reacting to top-down AI rollout decisions, which often leads to workarounds and the growing use of unsanctioned or “shadow” AI tools.

TrendAI’s earlier threat research, released in November 2025, showed how attackers are increasing both the speed and scale of attacks by using AI to automate reconnaissance, accelerate phishing campaigns, and lower the barrier to entry for cybercrime.

Uncertainty around autonomous AI

Notably, the new report shows that confidence in more advanced, autonomous systems, like agentic AI, is still maturing. Fifty-seven per cent of IT decision makers believe agentic AI can significantly improve cyber defence in the near term, but concerns remain around data access, misuse, and lack of oversight.

According to the study, 42 per cent of IT decision makers worry about the abuse of trusted AI status, and 39 per cent fear AI agents accessing sensitive data, while 36 per cent warn about risks related to autonomous code deployment.

At the same time, 38 per cent say they do not have observability or auditability over these systems, which raises concerns about how organisations can control or intervene once these agents are deployed.

To address these risks, 40 per cent of organisations support introducing AI “kill switch” mechanisms to shut down systems in cases of failure or misuse, though nearly half remain undecided.

The lack of consensus underscores a broader challenge: organisations are moving towards autonomous AI without a shared framework for maintaining control when it matters most.

“Agentic AI is moving organisations into a new risk category,” said Rachel Jin, chief platform and business officer at Trend Micro, who added that concerns range from sensitive data exposure to loss of oversight.

“Without visibility and control, organisations are deploying systems they don’t fully understand or govern, and that risk is only going to increase unless action is taken,” she noted.