Intel just took the wraps off its 14th-generation Core Ultra mobile processors, which feature a redesigned architecture that promises more performance, while being power efficient so laptops can run longer on the go.

There is also a dedicated core in the processors aimed at tackling new AI workloads, so PC users can, say, create AI-generated images more quickly than before. That’s the promise, at least.

The new Core Ultra chips, codenamed Meteor Lake, are an important step up for a company that has seen rivals AMD and Apple zoom ahead with advanced chips in recent years.

Intel’s new CPUs are made on the company’s new “Intel 4” manufacturing process, which it hopes will close the gap with rivals in the coming years.

Like the architectural redesign, this much-delayed shift to a smaller node, from 10 nanometres to 7 nanometres, helps make the new Core Ultra chips more power efficient.

Interestingly, parts of these new processors will be made by rival semiconductor foundry TSMC in Taiwan, another first for Intel and an acknowledgement of the complex ecosystem needed to produce the most advanced chips today.

Back at Intel, the refreshed design is based on the company’s largest client System-on-Chip (SoC) architectural shift in 40 years, said Tim Wilson, the vice-president of Intel’s Design Engineering Group.

As a result, it is also the chipmaking giant’s most power efficient client processor in history, he told reporters during a briefing at the company’s new Malaysian facilities last month.

To boost graphics performance, Intel has integrated its discrete Arc graphics into Meteor Lake’s graphics tile. The integrated version is now named Xe LPG (the discrete graphics option will be named Xe HPG), and Intel expects a 2x increase in both performance and performance per watt over older Iris Xe integrated graphics.

Architectural redesign

A key improvement in the new chips is the power efficiency they bring to laptops and other portable devices, so these can operate longer without needing a recharge.

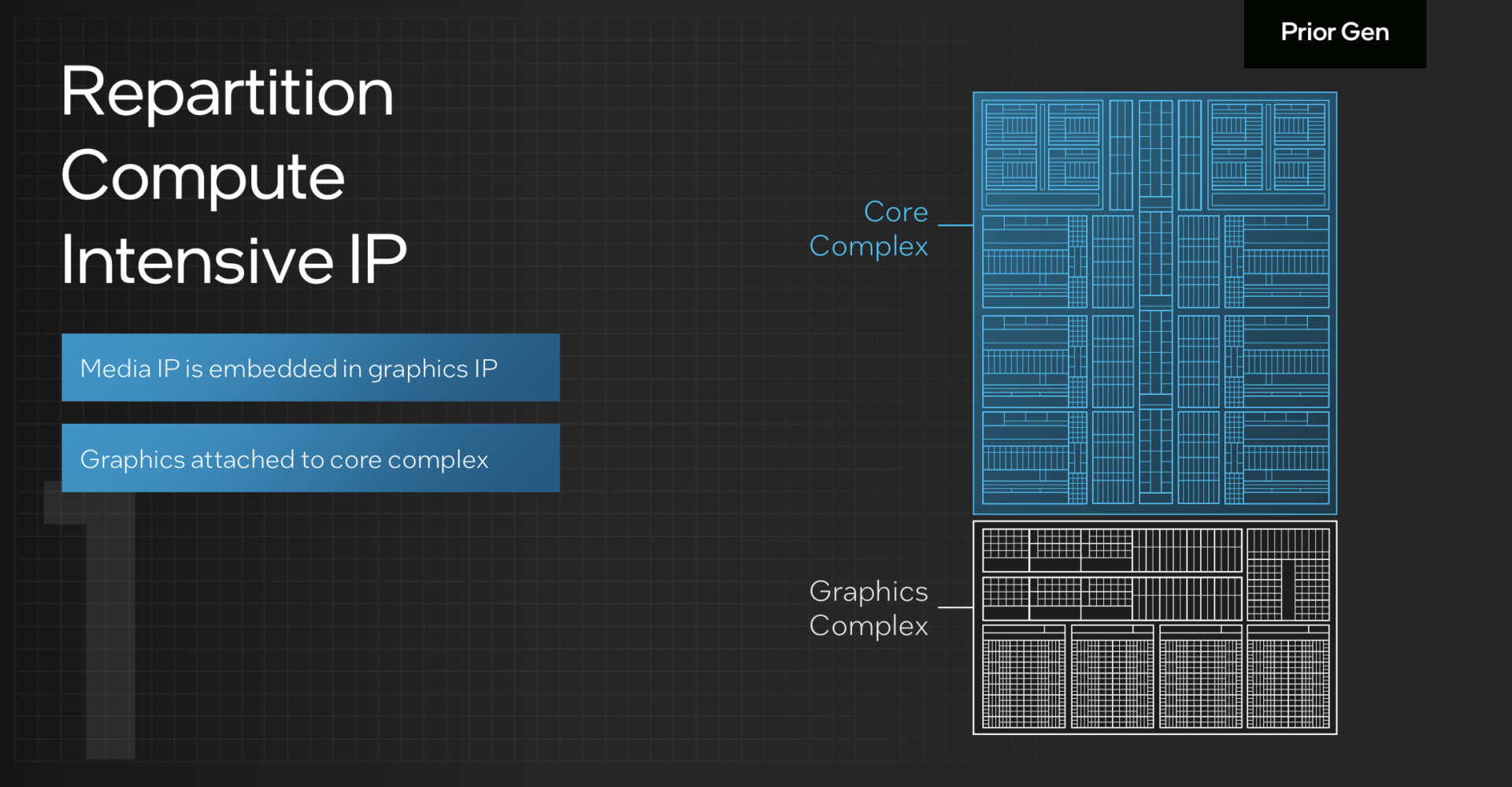

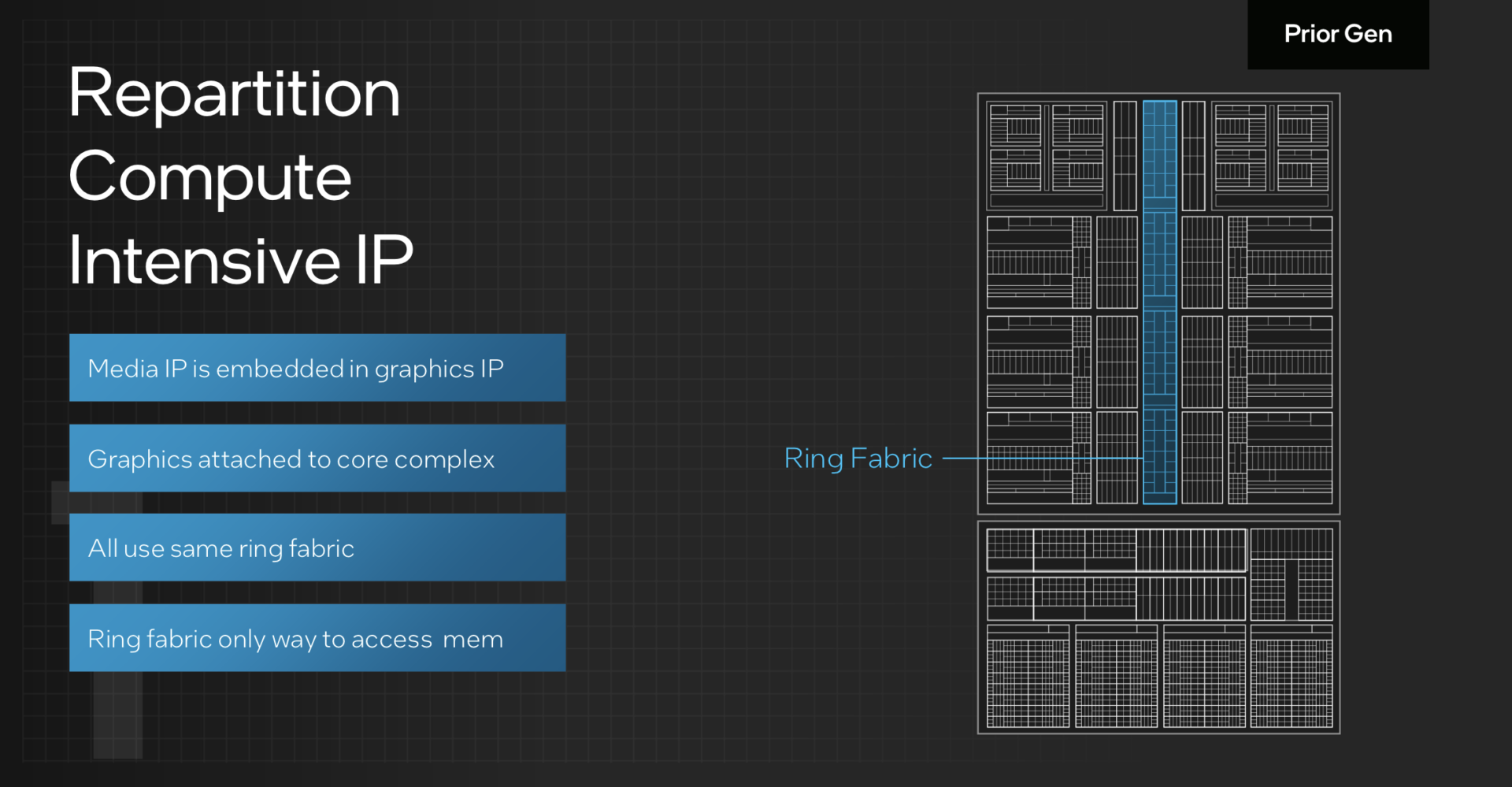

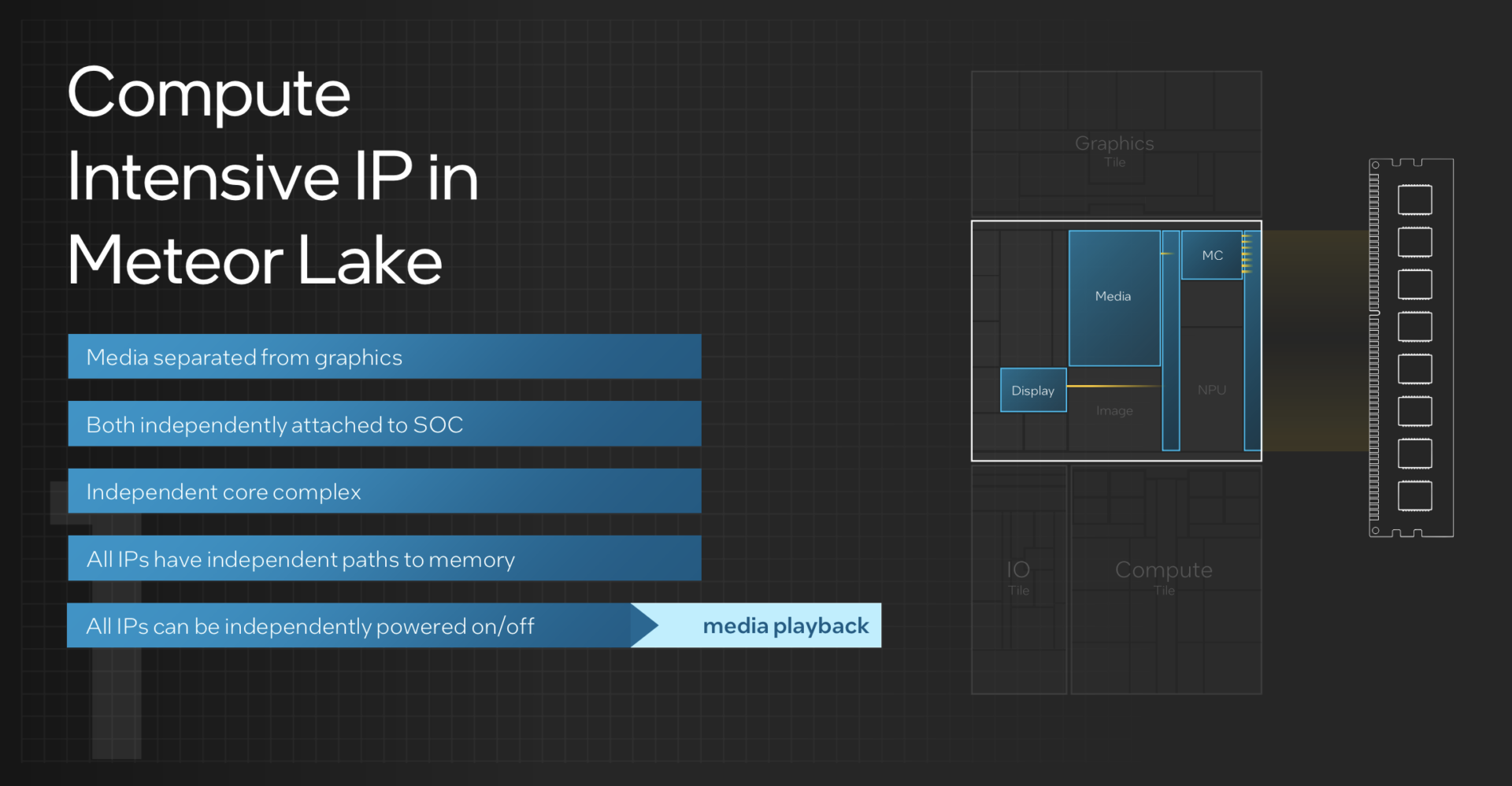

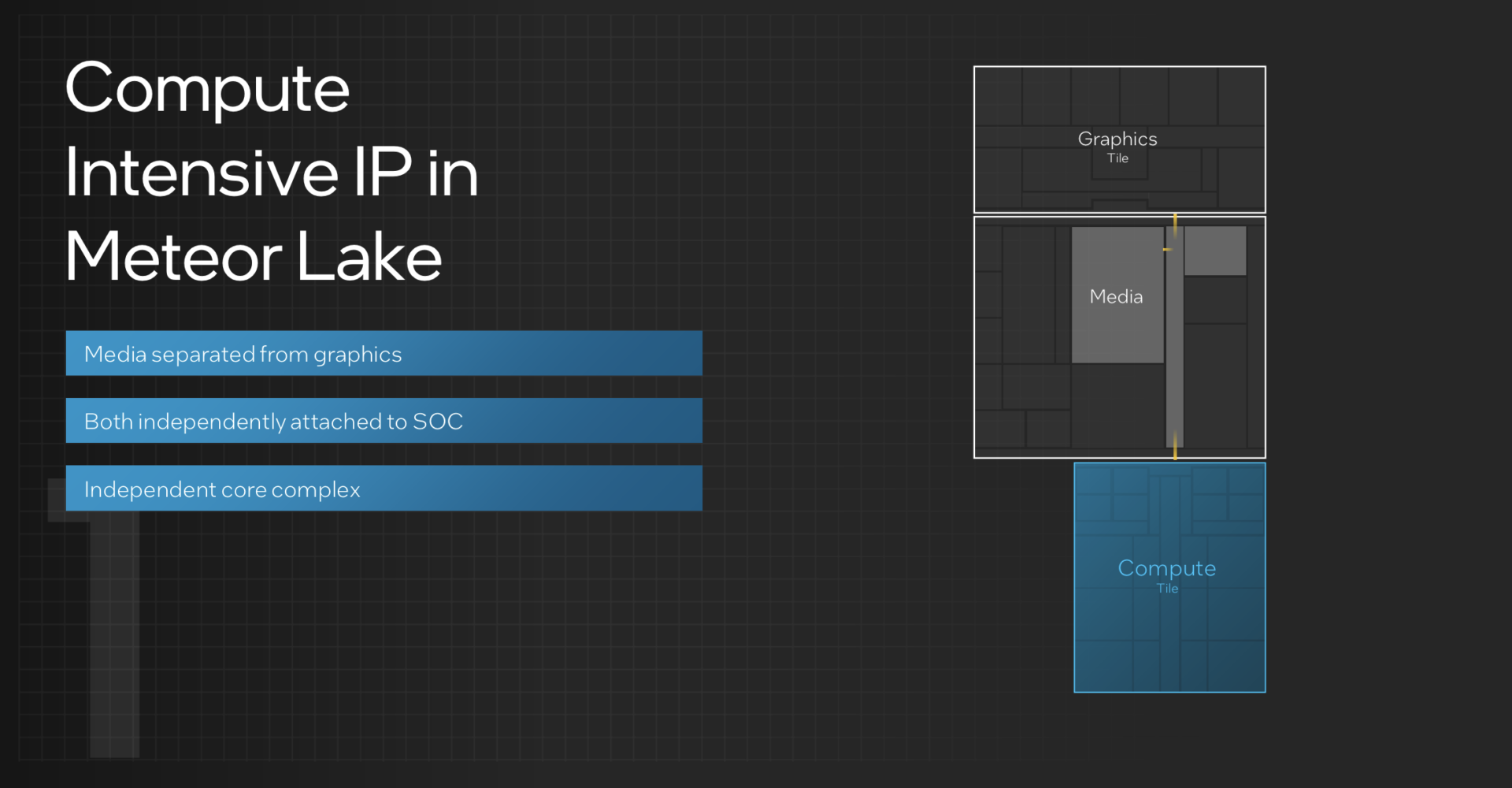

Here, the refreshed chip architecture is critical. In previous processors, a single processing unit consisted of a core complex and a graphics complex.

The graphics complex accesses memory through a ring fabric running through the core complex. Media processing capabilities, like for video streaming, are embedded within the graphics complex.

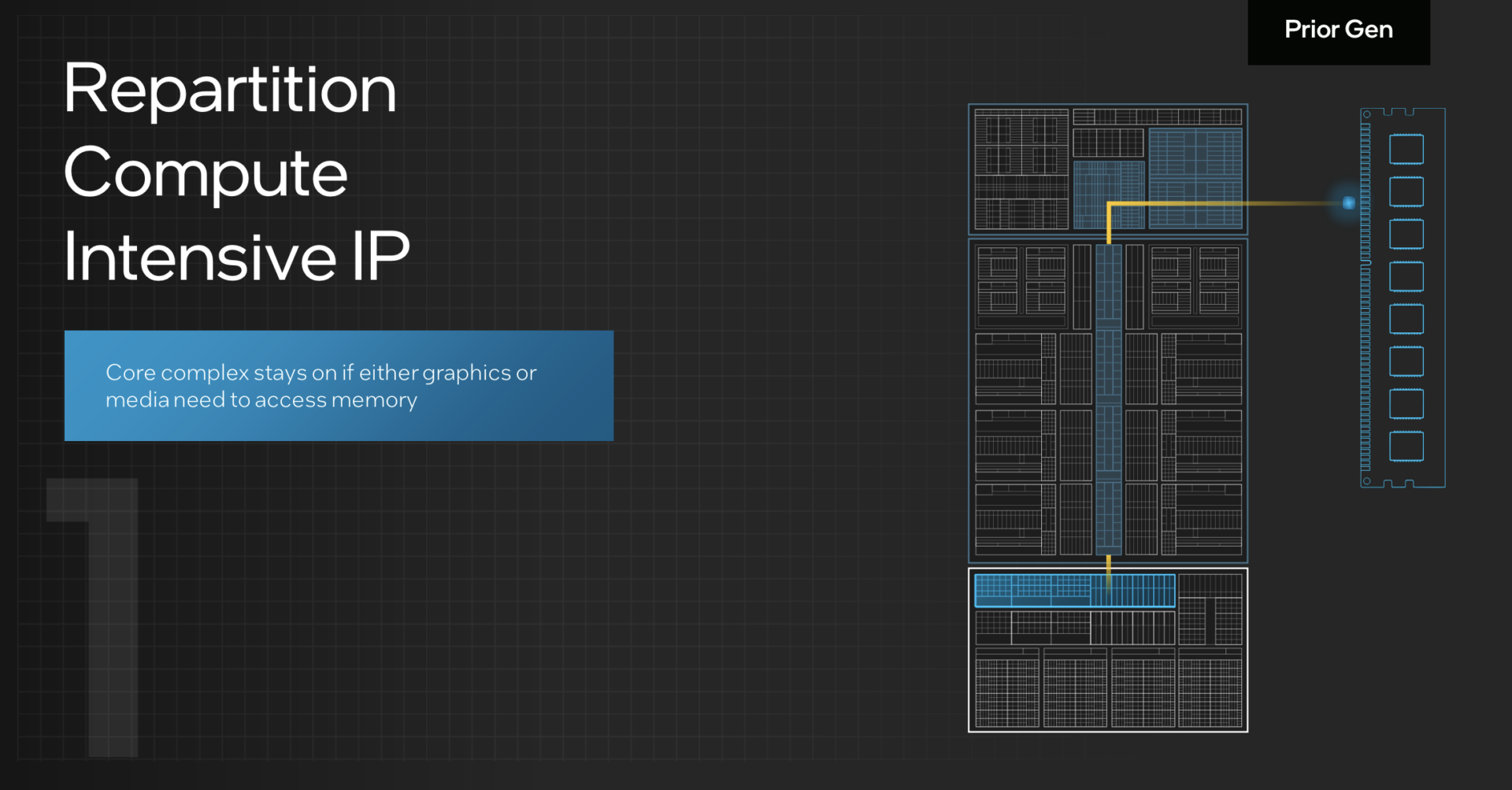

This meant the power-intensive core complex stayed on as long as the graphics complex required memory. At the same time, the entire graphics complex was powered up for simple tasks like media playback.

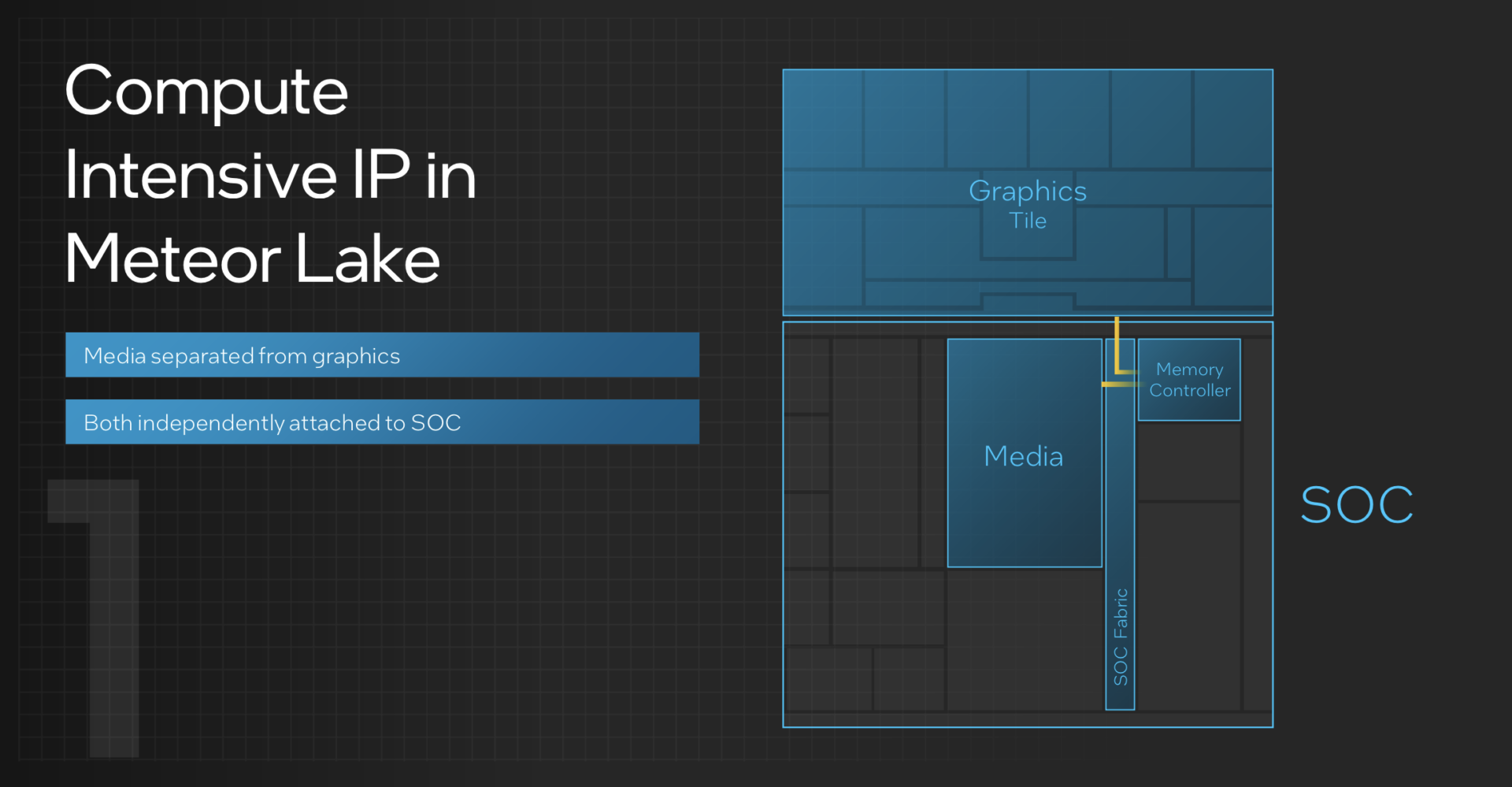

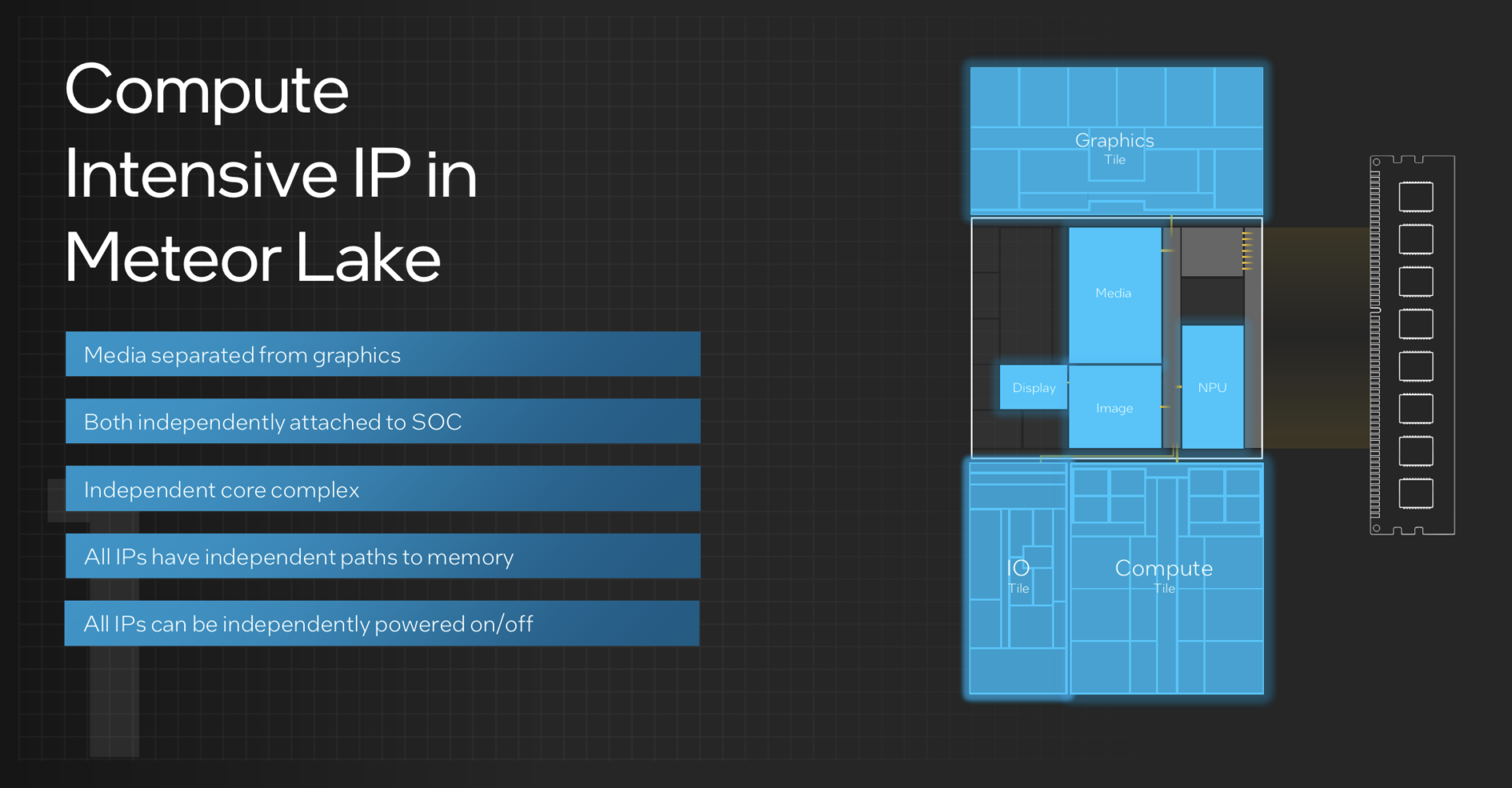

With the architectural redesign, the single processing unit with its two complexes is now “disaggregated” into separate “tiles”, which are then integrated into one package through Intel’s Foveros 3D advanced packaging tech.

This tile-based design lets media processing become its own block, separate from the graphics tile and parked inside the SoC tile with the memory controller.

In practice, this makes media playback much more power efficient, as the graphics tile can stay off when a user is only watching a YouTube video and not gaming, for example.

Finally, every tile also has its own access to the memory controller, so each of them can be individually turned on and off for maximum energy savings.
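To make the difference concrete, here is a toy model in Python of which blocks have to stay powered for a given workload under the old and new layouts. It is only a sketch of the dependencies described above, not Intel’s actual power-management logic. The workload names, the `powered_blocks` helper and the assumption that gaming also wakes the Compute tile are illustrative choices, not details from Intel.

```python
# Toy model of block-level power dependencies. Block names follow the
# article; the workload names and dependency sets are simplifying assumptions.

# Older layout: media sits inside the graphics complex, and the graphics
# complex reaches memory through the ring fabric in the core complex.
LEGACY_NEEDS = {
    "media_playback": {"graphics_complex", "core_complex"},
    "gaming": {"graphics_complex", "core_complex"},
    "compute": {"core_complex"},
}

# Tiled layout: media lives in the SoC tile next to the memory controller,
# and every tile has its own path to memory.
TILED_NEEDS = {
    "media_playback": {"soc_tile"},
    # Assumption: a game needs the CPU cores as well as the graphics tile.
    "gaming": {"graphics_tile", "compute_tile", "soc_tile"},
    "compute": {"compute_tile", "soc_tile"},
}


def powered_blocks(needs, workloads):
    """Return the set of blocks that must be powered for the workloads."""
    active = set()
    for workload in workloads:
        active |= needs[workload]
    return active


if __name__ == "__main__":
    for label, needs in (("legacy", LEGACY_NEEDS), ("tiled", TILED_NEEDS)):
        print(label, sorted(powered_blocks(needs, ["media_playback"])))
```

In this model, video playback on the tiled layout wakes only the SoC tile, while the older layout keeps both complexes powered for the same task.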

Low-power efficiency cores, chiplets from different makers

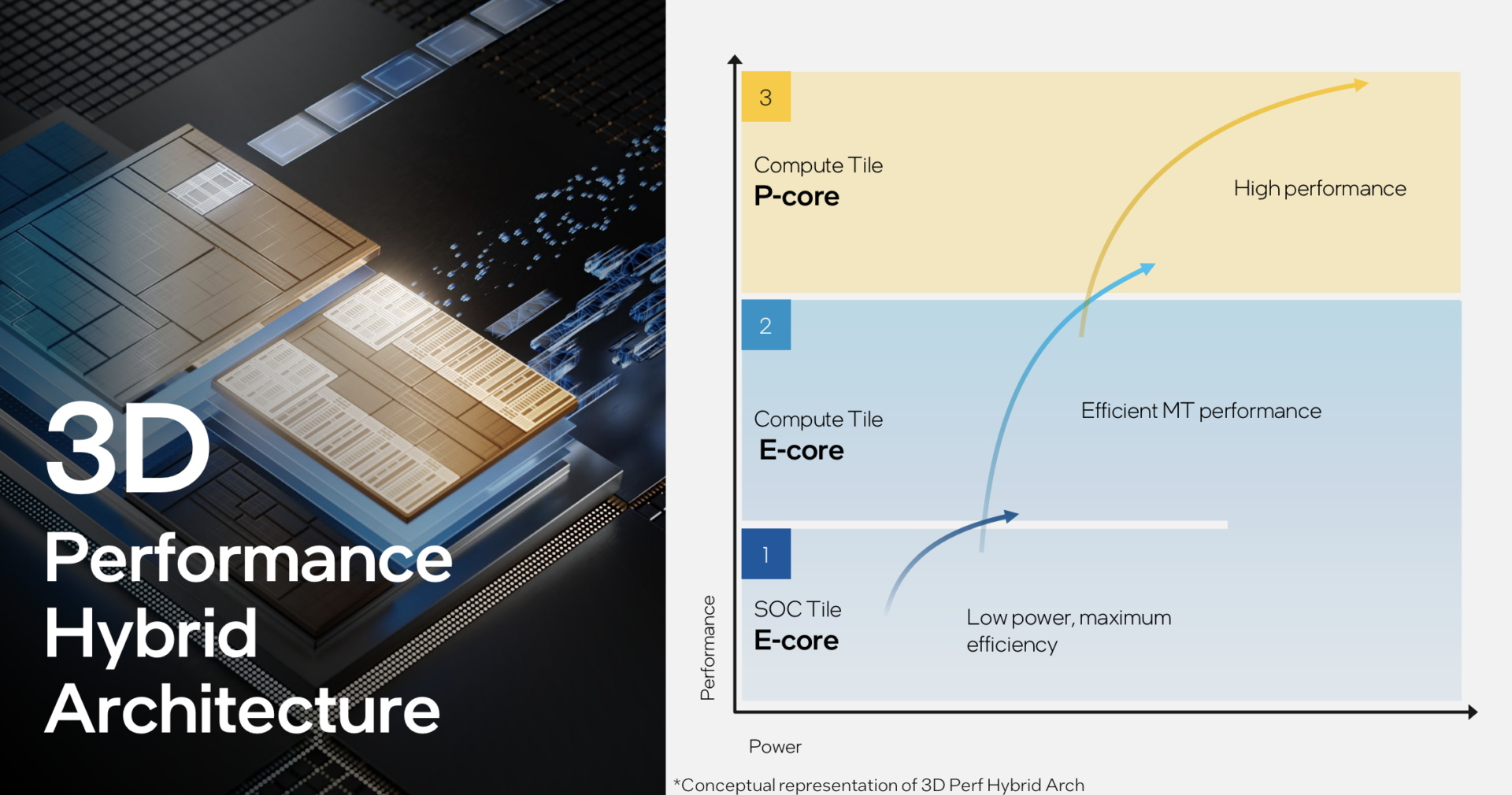

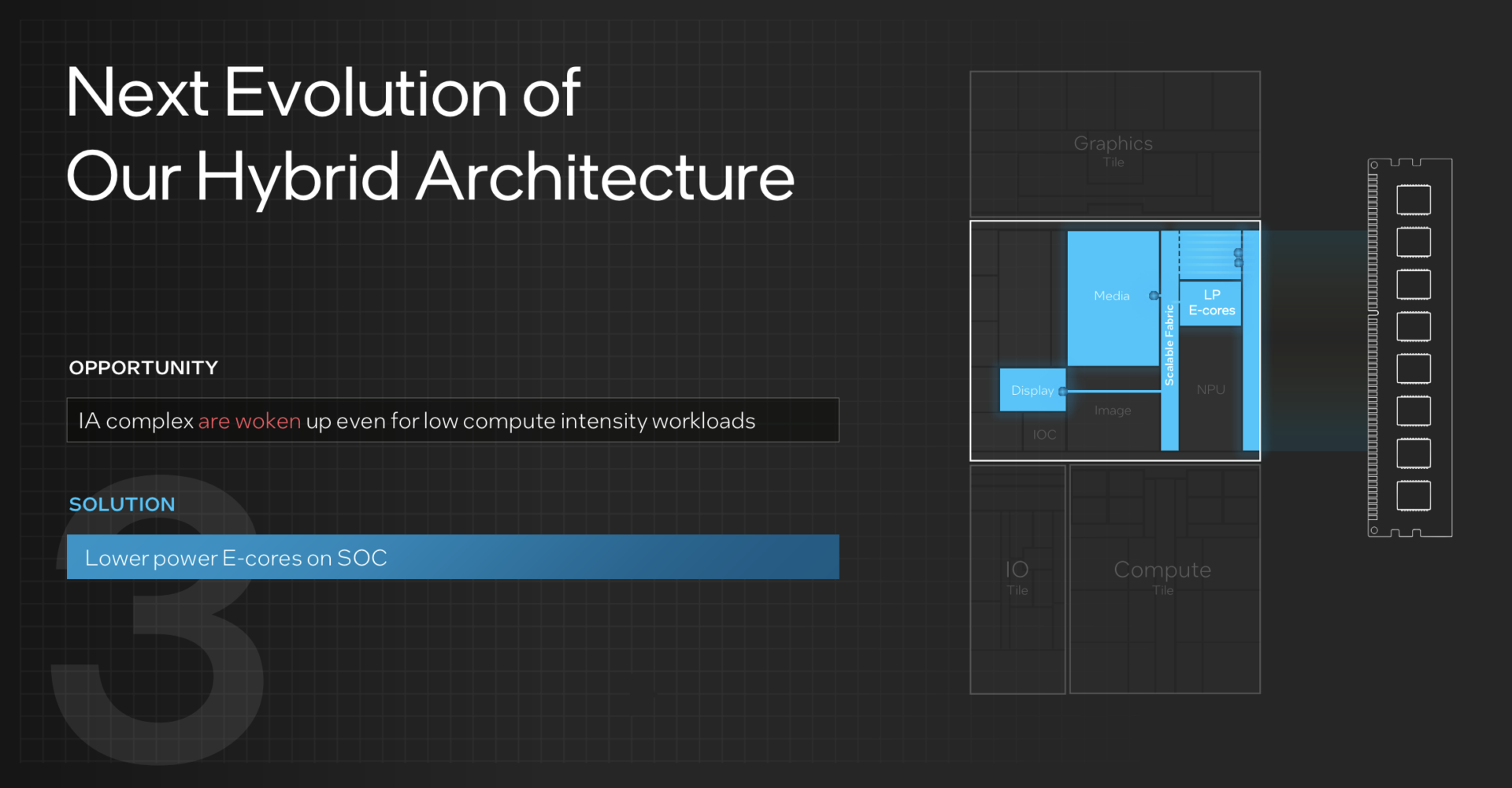

On the new Meteor Lake chips, logic processing design also gets a facelift. Intel’s “3D performance hybrid architecture” (basically, the use of Performance and Efficiency cores instead of multiple similar cores) in place since earlier Alder Lake processors now includes a third core type – a Low-Power Efficiency core (LP E-core).

Aimed at low-demand workloads like video streaming, as well as continuous low-power AI tasks, the new LP E-cores will sit within the SoC tile, so the Compute tile is only powered up when more processing heft is needed.

Heavier logic processing needs are devolved from the core complex into a Compute tile, home to the Redwood Cove Performance-cores and Crestmont Efficiency-cores, each with their new microarchitectures.

The shift towards what is known as a “disaggregated” chip design enabled by advanced packaging gives Intel the flexibility to use different foundries for each tile.

Interestingly, only the Compute tile is made on the Intel 4 process. This is on the top left of the chip visualised in the cover image of this story.

The Graphics tile is made on rival TSMC’s 5-nanometre process, while the SoC and IO tiles are on TSMC’s 6-nanometre process.

Neural processing unit for AI workloads

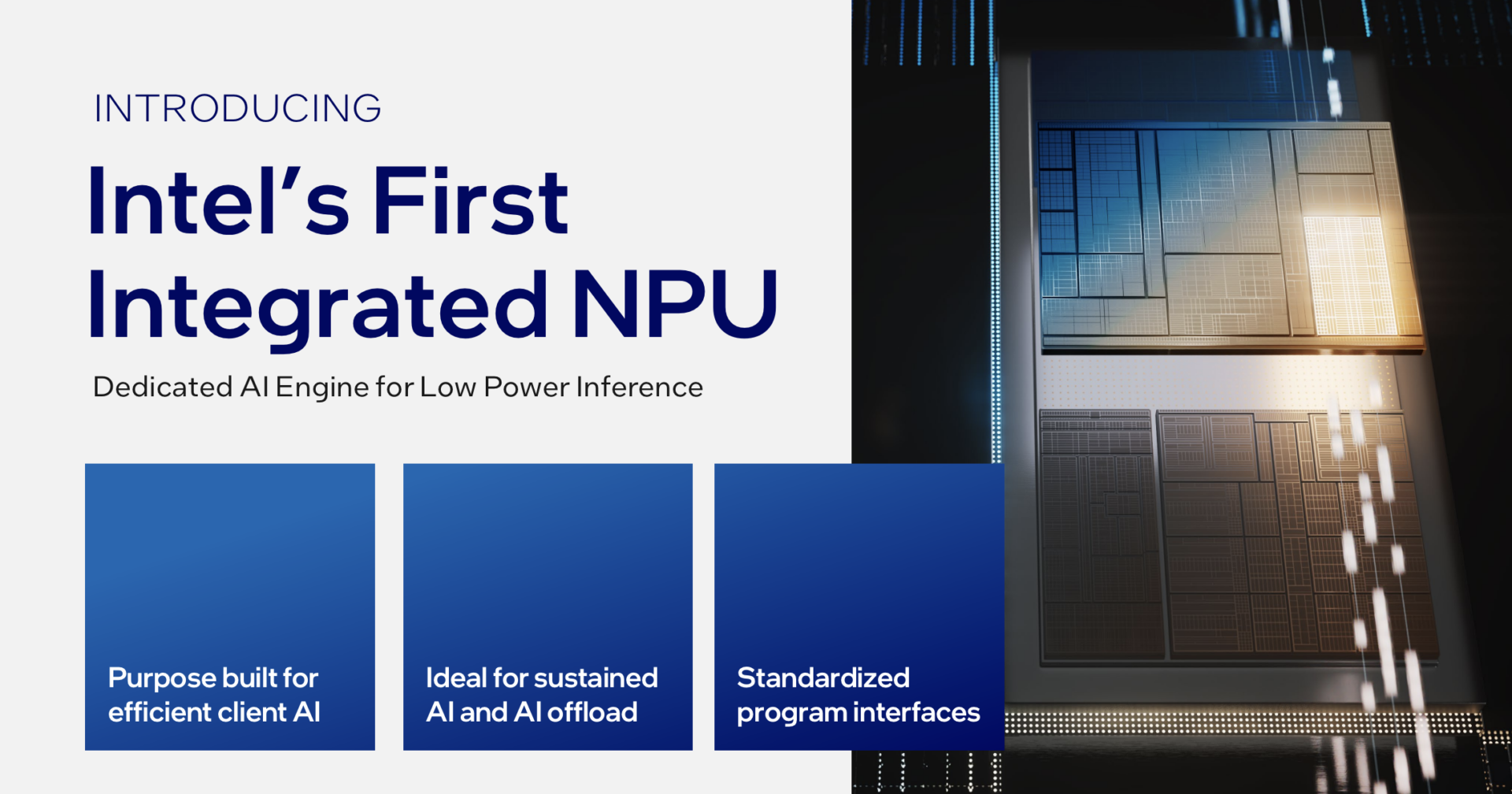

Meteor Lake is also the first Intel processor to pack a dedicated Neural Processing Unit (NPU) for AI workloads.

Intel expects AI workloads to grow beyond the capabilities of centralised servers, and sees it as its responsibility to move neural processing from servers in data centres onto end-user devices like personal computers.

The company quotes studies that expect three-quarters of enterprise data to be created and processed outside of data centres and the cloud by 2025.

It also foresees a continued surge in AI inference demand, driven by the mainstreaming of large language models like ChatGPT that would shift computational load from data centres onto client devices.

With the heavy lifting carried out on user devices, AI work such as processing a voice-to-text transcription of an Intel keynote will no longer be affected by network bandwidth limits and latency.

This is because the data no longer has to travel to the cloud for processing, with the output then sent back to the user’s device.

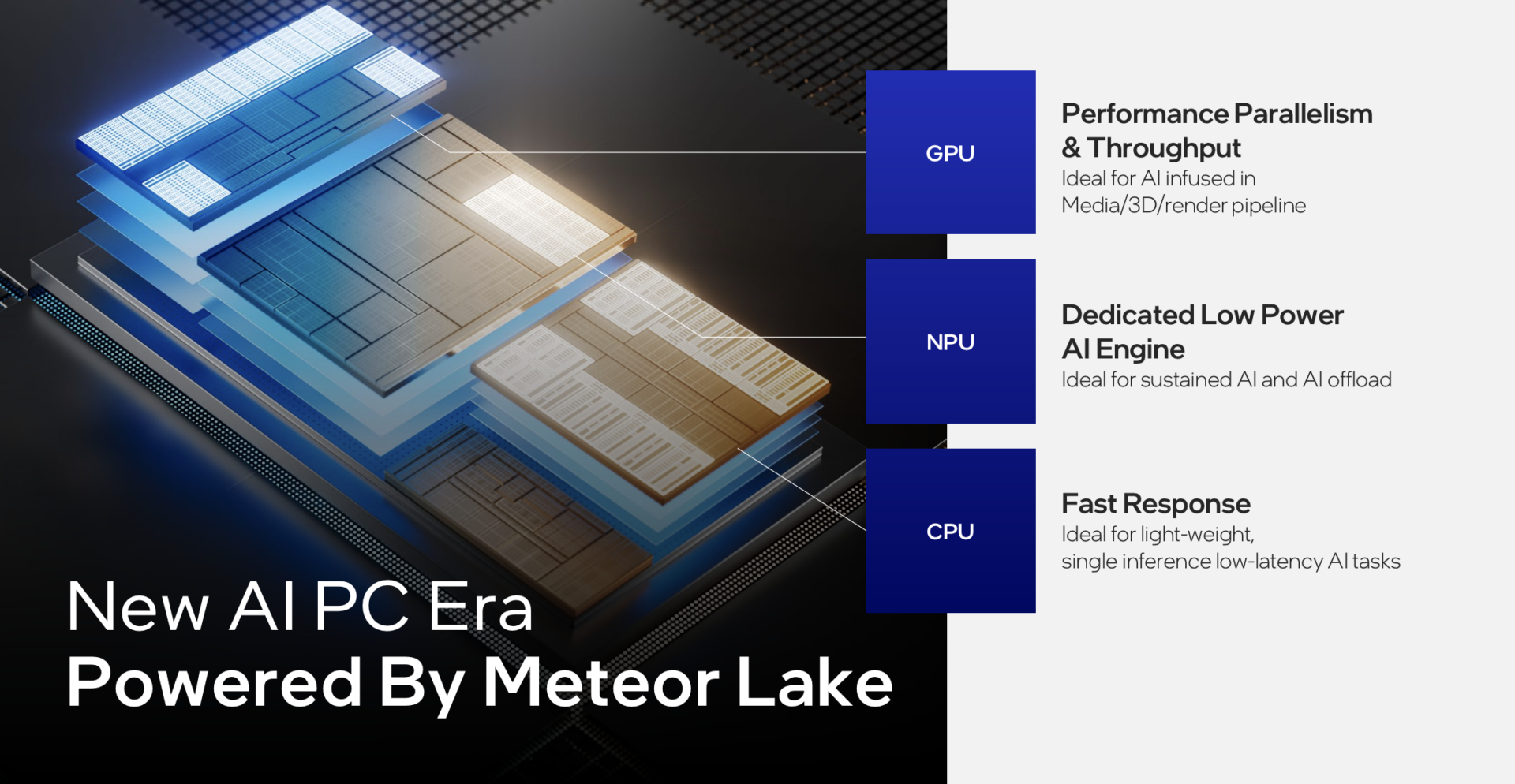

The NPU is expected to complement the CPU and GPU, by handling light, sustained AI work that maximises performance-per-watt. An example would be machine learning workloads by a security camera running 24/7 video analytics for crowding or suspicious persons.

The CPU can then focus on single-inference, low-latency AI tasks, while the GPU handles throughput intensive AI work like Stable Diffusion, which generates images from text inputs.
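The division of labour described here can be sketched as a simple routing rule in Python. This is an illustration of the article’s description, not Intel’s scheduler or any real driver API. The `Engine` enum, the `pick_engine` function and its two flags are hypothetical, and sending a job that is both sustained and throughput-heavy to the GPU is an assumption.

```python
from enum import Enum


class Engine(Enum):
    CPU = "CPU"
    GPU = "GPU"
    NPU = "NPU"


def pick_engine(sustained: bool, throughput_heavy: bool) -> Engine:
    """Pick a compute engine for an AI task (hypothetical routing rule)."""
    if throughput_heavy:
        # e.g. Stable Diffusion image generation
        return Engine.GPU
    if sustained:
        # e.g. 24/7 video analytics, where performance per watt matters most
        return Engine.NPU
    # single-inference, low-latency tasks
    return Engine.CPU


if __name__ == "__main__":
    print(pick_engine(sustained=True, throughput_heavy=False).value)
    print(pick_engine(sustained=False, throughput_heavy=True).value)
    print(pick_engine(sustained=False, throughput_heavy=False).value)
```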

In its demos, Intel has shown how its NPU accelerates basic Windows AI workloads like improving subject separation while running background blur filters during video calls.

It also helps facilitate Copilot (the Windows equivalent of a Bing assistant) conversations, though the NPU looks like a bet on the future, given nascent use cases today.

Availability

Laptops packing Core Ultra chips are expected on December 14, which is when the performance and efficiency promises that Intel has put out will be tested in the real world.